Complete Guide to Object Tracking: Best AI Models, Tools and Methods in 2023

In this ultimate guide and tutorial you will learn what is object tracking and learn how to track objects on your videos with the best models and tools.

Table of Contents

Object tracking is a challenging task especially when it comes to creating custom datasets for tracking. For example, a single one-hour video contains 86400 frames = 60 minutes × 60 seconds in every video × 24 frames per second (FPS) 😱. If you have about 8-12 objects on every frame on average, the total number of objects will be around one million (1M).

![]() Number of frames in a general one-hour video

Number of frames in a general one-hour video

But what if you can place millions of bounding boxes automatically? In this tutorial, we will show you the top AI models and automatic video annotation tools you can use to efficiently track objects on videos. Furthermore, we will address and clarify the existing confusion in the terminology that exists in the field of object tracking nowadays.

Video tutorial

In this video guide, you will learn how to use state-of-the-art models for object tracking inside the video annotation tool to automate manual object tracking.

What is Visual Object Tracking?

Object tracking is a fundamental computer vision task, which aims to predict the position of a given target object on each video frame. This task is used in a wide range of applications in robotics, video surveillance, autonomous cars, human-computer interaction, augmented reality and other fields.

In this tutorial, we will cover all the most popular subtasks in Visual Object Tracking:

-

Single Object Tracking (SOT)

-

Multiple Object Tracking (MOT)

-

Semi-supervised class-agnostic Multiple Object Tracking

-

Video Object Segmentation (VOS)

Let's take a look and explain all of the aforementioned tasks.

What is Single Object Tracking (SOT)?

Single Object Tracking (SOT) is the task of localizing one object on all video frames based on the manual annotation provided on the first frame. The user labels the target object with a bounding box on the first frame and then a neural network finds the object on the next frames. The annotation of a target object is often called a template, and the next frame is called a search area.

Neural networks from this category are trained to track any object using the annotation on the first frame. Such models are called class-agnostic neural networks. Thus, they can be directly used inside the video annotation tool 🚀 to speed up manual labeling by automatically tracking any object.

MixFormer object tracking

CVPR2022 SOTA video object tracking

Watch the demo below, it shows how the best class-agnostic models for single object tracking can be used inside Supervisely Video Labeling Toolbox for manual video labeling automation:

What is Multiple Object Tracking (MOT)?

This is probably the most confusing terminology in the Object Tracking field:

Multiple Object Tracking is not just Single Object Tracking, but for multiple objects.

Traditionally, in the literature, Multi-Object Tracking (MOT) is the task of automatically detecting the objects of the predefined set of classes and estimating their trajectories in videos. It means that such models were trained to detect and track only a specific set of classes. In general, it works the following way: the model automatically detects objects of certain classes on the frames and it is not possible to provide user feedback or correct the model during the process.

It is less common to use this approach inside video labeling tools during the annotation, but it is a typical production scenario where the deployed model predicts object tracks on videos and then some post-processing is applied to extract some insights from the data: for example, count objects of find objects with deviant unusual behavior.

![]() Example of Multi-Object Tracking: model automatically detects and tracks objects on videos

Example of Multi-Object Tracking: model automatically detects and tracks objects on videos

The Multiple Object Tracking process consists of two steps. The first step is to automatically detect the objects on the frame (detection problem). The second step is to combine detections from neighboring frames into tracklets (association problem or re-ID problem).

Some neural networks can solve both detection and association problems end-to-end simultaneously in a shared neural network. Inference speed will be faster, but the main problem with them is that they require labeled videos for training which is hard to obtain quickly. In addition, if you decide to update production requirements and track an additional class, you will have to label it in the entire training dataset. These factors make them difficult to use in production.

It is more common and convenient to use separate neural networks for detection and re-ID problems. It is usually referred to as the Tracking-by-Detection paradigm. Diagram credit.

![]() .Tracking-by-detection paradigm. Firstly, an object detector predicts objects on all video frames. Secondly, a tracking algorithm runs on top of the detections to perform data association, i.e. link the detections to obtain full trajectories.

.Tracking-by-detection paradigm. Firstly, an object detector predicts objects on all video frames. Secondly, a tracking algorithm runs on top of the detections to perform data association, i.e. link the detections to obtain full trajectories.

For example, a detection model just predicts bounding boxes on every frame and then a separate algorithm combines isolated predictions into the instance tracks. This approach has its benefits and drawbacks. It is easier to implement, train and customize. Any part of the pipeline can be easily replaced and improved with the newly released state-of-the-art neural network.

You can train a custom detection model on your custom dataset with labeled images and then use a trained detection model for object tracking on videos. Сheck out how to Train and predict a custom YOLOv8 model in Supervisely without coding in our tutorial.

But the main drawback compared with end-to-end models is that you should use two separate models instead of one and it leads to the decrease in performance - inference speed will be slower because of using two models instead of one.

In the video guide below, you will learn how to do object tracking using YOLOv8 on your videos in a few clicks. You will need two Supervisely Apps. The first one is to run the YOLOv8 model on your computer with GPU, and the second one is to get predictions on every video frame and combine them into tracks using the variation of DeepSort algorithm.

Serve YOLOv8 | v9 | v10 | v11

Deploy YOLOv8 | v9 | v10 | v11 as REST API service

Apply NN to Videos Project

Predictions on every frame are combined with BoT-SORT/DeepSort into tracks automatically

We selected YOLOv8 just for demo, absolutely the same way you can use any detection model integrated into Supervisely including YOLOv5, MMDetection or Detectron2 frameworks.

Semi-Supervised Class-Agnostic Multiple Object Tracking

Why can't we track multiple objects with the same approach as in Single Object Tracking? In academia, this task is not popular, but practically, it is very convenient to perform model-assisted labeling of custom objects (class-agnostic) on the first frame (semi-supervised) and then track them automatically on the next frames.

Let's do the simple procedure — apply SOT model to every object on the first frame. During the process, we will be able to correct tracking predictions and re-track objects. Thus user at the same time can achieve high annotation speed with the AI-assistance and has full control over the labeling quality.

Here is how you can do it:

What is Video Object Segmentation?

Video Object Segmentation is like Single Object Tracking, but with masks. A user has to label the object mask on the first frame and then the model will segment and track the target object on other video frames. Learn everything about modern Video Object Segmentation and how to use corresponding AI automation tools on your custom data in our XMem + Segment Anything: Video Object Segmentation SOTA tutorial.

Conclusion

This complete guide on object tracking covers the main approaches and methods and explains the main differences between different subtasks like single or multiple tracking. In addition, video tutorials show how to use modern AI models and labeling tools with automation to perform object tracking on your custom videos.

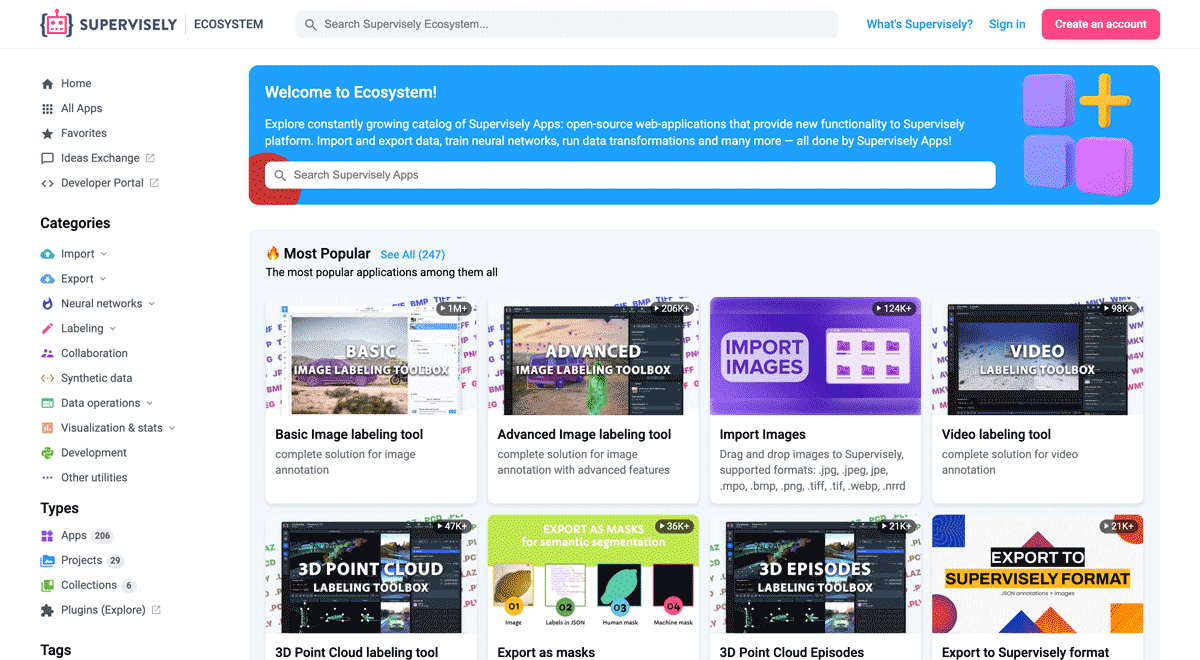

Supervisely for Computer Vision

Supervisely is online and on-premise platform that helps researchers and companies to build computer vision solutions. We cover the entire development pipeline: from data labeling of images, videos and 3D to model training.

The big difference from other products is that Supervisely is built like an OS with countless Supervisely Apps — interactive web-tools running in your browser, yet powered by Python. This allows to integrate all those awesome open-source machine learning tools and neural networks, enhance them with user interface and let everyone run them with a single click.

You can order a demo or try it yourself for free on our Community Edition — no credit card needed!